In this post, I will describe a new and efficient Asynchronous Approximate Agreement($\epsilon$-agreement) protocol with only $\mathcal{O}(n^2)$ bits of communication per round. Our protocol requires honest nodes to only have binary inputs, where every honest node’s input $V_i \in {v_0,v_1}$, where both $v_0$ and $v_1$ are publicly known and $\Delta = v_1-v_0$.

Background

The Asynchronous Approximate Agreement or $\epsilon$-agreement primitive allows a set of nodes to approximately agree on a value within the convex-hull of honest nodes’ inputs. This protocol circumvents the prominent Fischer-Lynch-Patterson(FLP)-impossibility result, which states that no deterministic asynchronous protocol can achieve agreement amongst a set of nodes with at least one crashed node. Any $\epsilon$-agreement protocol must satisfy the following three properties.

- Termination: Every honest node $i$ must eventually decide a value $v_i$.

- $\epsilon$-agreement: The decided values $v_i,v_j$ of any honest nodes $i,j$ must be within $\epsilon$ distance of each other. \(|v_i-v_j| < \epsilon \forall i,j \in \{1,..,n\}\)

- Convex-Hull Validity: The decided value $v_i$ of any honest node must be within the convex-hull of honest nodes’ inputs. \(\min(\mathcal{V})\leq v_i \leq \max(\mathcal{V})\)

Abraham, Amit and Dolev’s[1] $\epsilon$-agreement protocol achieves all the three properties with an optimal resilience of $1/3$ faults and has a communication complexity of $\mathcal{O}(\kappa n^3)$ bits. The $\kappa$ factor in communication complexity results from the use of Das, Xiang, and Ren’s[2]Reliable Broadcast protocol. If instead Bracha’s RBC was used, the communication complexity of Abraham, Amit, and Dolev’s[1] $\epsilon$-agreement would be $\mathcal{O}(n^4)$ bits.

Preliminaries

We define the Asynchronous Crusader Agreement primitive, also referred to as 1-slot Proxcensus in Fitzi, Liu-Zhang, and Loss[3].

A crusader agreement protocol $\mathcal{C}$ amongst $n$ nodes must guarantee the following properties.

- Agreement: If two honest nodes decide values x and y, then either x=y or at least one of the values is $\perp$

- Liveness: All honest nodes eventually decide and then eventually terminate

- Validity: If all honest nodes have the same input x, then they must output x

Using this primitive, I build an $\epsilon$-agreement protocol.

Protocol

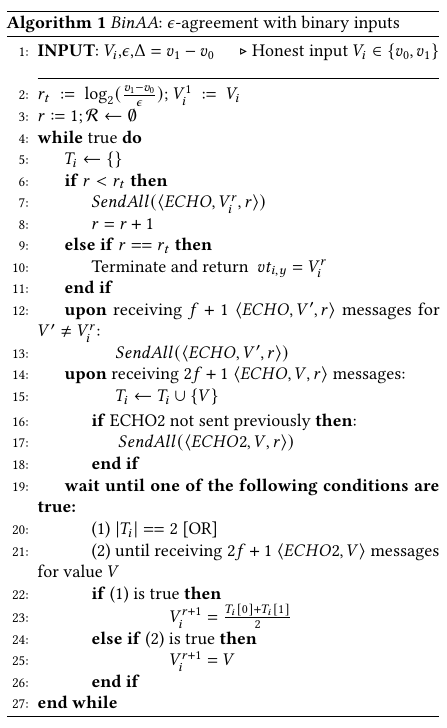

I will briefly describe the protocol in Algorithm 1. Each node $i$ broadcasts an ECHO message for its value $V_{i}$. It also broadcasts an ECHO for any other value $V’ \neq V_{i}$ for which it received $f+1$ ECHOs. For a value $v$ to receive $f+1$ ECHOs, at least one non-faulty node must have input $v$ to the protocol. Once a node receives $n-f$ ECHO messages for value $V$, it broadcasts an ECHO2 message for $V$. It waits until it receives $n-f$ ECHOs for two different values $V,V’$ to output $\perp$ or until it receives $n-f$ ECHO2 messages for a single value $V$. In the former case, it updates its state for round $r+1$ as $V_{i} = \frac{V+V’}{2}$, and in the latter case, it updates its state as $V_{i} = V$.

If a node receives $n-f$ ECHO2s for a single value $V$, then no other sensor can receive $n-f$ ECHO2s for any other value $V’ \neq V$. Therefore, after round $1$, non-faulty sensors’ values can be characterized by the following four sets: 1. {${v_{0}}$}, 2. {${v_{0},\frac{v_{0}+v_{1}}{2}}$},3. {$\frac{v_{0}+v_{1}}{2},v_{1}$}, 4. {$v_{1}$}, and all four sets have the difference between their states $\Delta_1 \leq \frac{v_{1}-v_{0}}{2}$. After $\log_{2}(\frac{v_{1}-v_{0}}{\epsilon})$ rounds, the nodes achieve $\epsilon$-agreement.

The main intuition behind the reduced communication complexity is that we simplify the node-centric broadcast phase using $n$-parallel reliable broadcasts from Abraham, Amit, and Dolev and replace it with a value-centric broadcast phase derived from Asynchronous crusader agreement. The former phase involves every node participating in the reliable broadcast of every other node, leading to an $\mathcal{O}(n^3)$ factor in communication complexity. However, since our protocol deals with a restricted domain with only two values, the value-centric phase has an $\mathcal{O}(n)$ factor of improvement over the node-centric broadcast phase.

Complexity Analysis

In each round, every node broadcasts an ECHO message for at most two values, and an ECHO2 message for at most one value. Therefore, each round of $\epsilon$-agreement through this protocol costs only $\mathcal{O}(n^2)$ messages, with each message of size $\mathcal{O}(\log(\frac{\Delta}{\epsilon}))$ bits. Therefore, $\mathcal{O}(\log(\frac{\Delta}{\epsilon}))$ rounds costs $\mathcal{O}(n^2\log^2(\frac{\Delta}{\epsilon}))$ bits overall to achieve $\epsilon$-agreement.

This communication can be reduced to $\mathcal{O}(n^2\log(\frac{\Delta}{\epsilon})\log\log(\frac{\Delta}{\epsilon}))$ bits using a small modification. In the beginning of each round $r>1$, a node broadcasts a new type of message $\langle VAL,2L/L/C/R/2R, r\rangle$ (replacing the ECHO1 message on line 7), where $L/C/R$ signify whether the node’s state value $b_{i,r}$ moved to the left by one or two spaces, stayed at $b_{i,r-1}$, or moved to the right by one or two spaces. When a node $j$ receives a message $\langle VAL,L,r\rangle$ from node $i$, it waits for all $\langle VAL,.,r_i\rangle$ messages from rounds $r_i\in{1,..,r}$. Based on this sequence of messages, $j$ deduces $i$’s state value $b_{i,r}$. Then, it counts $i$’s $\langle VAL\rangle$ message as an ECHO1 message for value $b_{i,r}$. Further, while amplifying other values using ECHO1 and ECHO2 messages, nodes use this technique to denote the value they are echoing. This technique of waiting for messages from prior rounds has been described in Abraham et al. [1] as FIFO-broadcast. The FIFO broadcast primitive delivers messages in the order that they were broadcast by the sender.

We give a brief rationale about why this technique works. We know that in each round, the range of honest state values either reduces by a $\frac{1}{2}$ fraction or collapses to $0$. Further, honest state values after each round are binary. Let $S_r = ${$b_{r,0},b_{r,1}$} be the set of honest state values in round $r$. Then, the set of values in round $r+1$ becomes one of the following four sets: $S_{r+1} =$ {$b_{r,0}$},{$b_{r,0},\frac{b_{r,0}+b_{r,1}}{2}$},{$\frac{b_{r,0}+b_{r,1}}{2},b_{r,1}$},{$b_{r,1}$}. We denote an honest node with value $b_{r,1}$ in round $r$ updating its state to $\frac{b_{r,0}+b_{r,1}}{2}$ (or shifting towards the left by $\frac{\Delta}{2^r}$) in round $r+1$ using the term $L$. Similarly, we denote an honest node updating its state from $b_{r,1}$ to $b_{r,0}$ (or shifting towards the left by $\frac{\Delta}{2^{r-1}}$) using the term $2L$. We use similar notation for a node shifting its state value towards the right. Note that a shift of $2L$ or $2R$ implies no further state shifts because of collapsed range. Therefore, using a sequence of such state shifts, a node $j$ can correctly calculate node $i$’s state value in round $r$.

The communication complexity of this updated protocol is $\mathcal{O}(n^2\log(\frac{\Delta}{\epsilon})\log\log(\frac{\Delta}{\epsilon}))$. The $\log\log(\frac{1}{\epsilon})$ factor is due to the inclusion of the round number in each message.

Proof Sketch

We first prove that if the protocol is in a bivalent state in round $r$, it must move to a bivalent or univalent state in round $r+1$. Assuming nodes start round $r$ of the protocol with values $c_0’$ and $c_1’$. From the properties of Crusader agreement, the protocol moves into state {$c_0’,\perp$} or {$c_1’,\perp$}. Honest nodes receiving \bottom update their value to $v = \frac{c_0’+c_1’}{2}$, which implies that the range of honest values reduces to $\frac{c_1’-c_0’}{2}$.

In contrast, if the state after round $r$ is univalent, the nodes have already agreed, which implies that the range of honest inputs reduces by at least a factor of $\frac{1}{2}$. Therefore, after at most $\log_2(\frac{\Delta}{\epsilon})$ rounds, the protocol achieves \approxconsensus.

References

[1] Abraham, Ittai, Yonatan Amit, and Danny Dolev. “Optimal resilience asynchronous approximate agreement.” In Principles of Distributed Systems: 8th International Conference, OPODIS 2004, Grenoble, France, December 15-17, 2004, Revised Selected Papers 8, pp. 229-239. Springer Berlin Heidelberg, 2005.

[2] Das, Sourav, Zhuolun Xiang, and Ling Ren. “Asynchronous data dissemination and its applications.” In Proceedings of the 2021 ACM SIGSAC Conference on Computer and Communications Security, pp. 2705-2721. 2021.

[3] Fitzi, Matthias, Chen-Da Liu-Zhang, and Julian Loss. “A new way to achieve round-efficient Byzantine agreement.” In Proceedings of the 2021 ACM Symposium on Principles of Distributed Computing, pp. 355-362. 2021.

]]>